At CES 2026, Nvidia unveiled the Vera Rubin platform, positioning it as the successor to the highly successful Blackwell architecture and the centrepiece of the next generation of AI data centres. Nvidia expects Rubin to substantially improve AI training and inference performance while lowering operating costs.

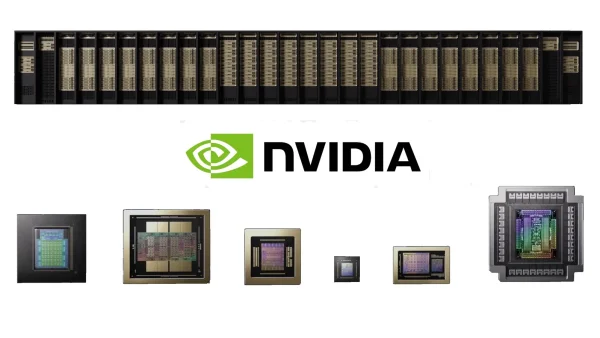

Six Chips, One Supercomputer

The Rubin platform comprises six chips:

– Vera CPU: An Nvidia-designed CPU built for data movement and “agentic” AI workloads.

– Rubin GPU: The main AI processor, delivering up to 50 petaflops of NVFP4 compute, roughly five times that of the previous-generation Blackwell GPUs.

– NVLink 6 Switch: The latest scale-up interconnect, linking the GPUs in a rack with extremely high bandwidth to prevent communication bottlenecks.

– ConnectX-9 SuperNIC: A high-speed network adapter with programmable acceleration for data movement and congestion management.

– BlueField-4 DPU: A data processing unit that offloads infrastructure functions and supports secure computing.

– Spectrum-6 Ethernet Switch: A high-end Ethernet switch with integrated optics and very high throughput for scale-out networking.

Rack-Scale Focus and Extreme Co-Design

These parts are designed to work as a single AI supercomputer rather than as a collection of loosely coupled chips. This tight co-design of compute, networking and system fabric is key to scaling efficiently in data centres.

Rubin is built on the idea that AI performance depends on more than the compute chips themselves. The platform has been engineered so that CPUs, GPUs, networking and data processing are co-optimised from the outset, a departure from the older approach in which chips and networking were often designed separately.

This enables rack-scale systems such as the NVL72, in which large groups of GPUs and CPUs operate as a single, tightly coupled domain with very high aggregate bandwidth and coherent shared memory.

Performance and Efficiency Claims

According to Nvidia, this design lets Rubin train large AI models with about a quarter of the GPUs that comparable Blackwell systems need, and cuts the cost per inference token by up to a factor of ten.
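To make the scale of these claims concrete, the Python sketch below applies the two stated ratios to a made-up baseline. The baseline GPU count and per-token cost are hypothetical placeholders, not Nvidia or market figures, and the ratios are the best-case claims quoted above ("about a quarter", "up to ten times") rather than measured results.

```python
import math

# Ratios taken from the claims reported above (best-case vendor figures).
GPU_COUNT_RATIO = 1 / 4     # "about a quarter of the GPUs"
TOKEN_COST_RATIO = 1 / 10   # cost per inference token cut "by up to ten times"


def rubin_estimate(blackwell_gpus: int, blackwell_cost_per_m_tokens: float) -> tuple[int, float]:
    """Scale a Blackwell baseline by the claimed ratios.

    Returns (estimated GPU count, estimated cost per million tokens).
    """
    gpus = math.ceil(blackwell_gpus * GPU_COUNT_RATIO)
    cost = blackwell_cost_per_m_tokens * TOKEN_COST_RATIO
    return gpus, cost


# Hypothetical baseline: 1,024 Blackwell GPUs and 2.00 currency units
# per million inference tokens. Both numbers are illustrative only.
gpus, cost = rubin_estimate(1024, 2.00)
print(f"Estimated Rubin GPUs: {gpus}")
print(f"Estimated cost per million tokens: {cost:.2f}")
```

Because both ratios are upper bounds on the improvement, the outputs are best read as a best case under Nvidia's own numbers, not as a forecast for any particular workload.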

Nvidia says the Rubin GPU delivers up to five times the inference performance of Blackwell, thanks to its NVFP4 precision support, a larger silicon area and 22 TB/s of HBM4 memory bandwidth.
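One way to read the compute and bandwidth figures together is a simple roofline-style ratio: how many operations a workload must perform per byte fetched from memory before the GPU's peak compute, rather than its memory bandwidth, becomes the limit. The sketch below uses only the two peak numbers quoted in this article (50 petaflops of NVFP4 and 22 TB/s of HBM4). It assumes both peaks are achievable at the same time, which real workloads rarely manage, so treat the result as a rough indicator rather than a specification.

```python
# Peak figures as quoted in this article (vendor claims, not measurements).
PEAK_NVFP4_FLOPS = 50e15        # up to 50 petaflops of NVFP4 compute
HBM4_BYTES_PER_SECOND = 22e12   # 22 TB/s of HBM4 memory bandwidth


def breakeven_flops_per_byte(peak_flops: float, bytes_per_second: float) -> float:
    """Operations needed per byte read from memory to become compute-bound."""
    return peak_flops / bytes_per_second


intensity = breakeven_flops_per_byte(PEAK_NVFP4_FLOPS, HBM4_BYTES_PER_SECOND)
print(f"Break-even arithmetic intensity: about {intensity:.0f} FLOPs per byte")
```

In this simplified model, a workload that performs fewer operations per byte than the break-even value is limited by memory bandwidth, which is one reason the bandwidth figure appears alongside raw compute in the performance claims.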

The Vera CPU, with 88 custom cores and 176 threads, provides high throughput for data handling, memory management and advanced AI workloads.

Together, these parts aim to substantially speed up both training and inference for large language models (LLMs), mixture-of-experts (MoE) architectures and other advanced AI workloads, with large reductions in total cost of ownership (TCO).

Production and Timeline

At CES 2026, Nvidia CEO Jensen Huang said the Rubin platform is in full production and on schedule for deployment later in the year. Industry observers point out, however, that such ramps usually begin with low-volume runs before reaching broad availability.

Software and Ecosystem Support

Rubin’s hardware is backed by Nvidia’s software stack, including libraries, frameworks and development tools that let data scientists and engineers optimise large AI models. Rubin will work with existing Nvidia CUDA tools while also enabling new capabilities in AI storage, memory management and model workflows that were not available in earlier generations.

Comparisons and Industry Trends

The move to full-stack, rack-scale systems is not unique to Nvidia: competitors such as AMD and Huawei are pursuing similar strategies. AMD’s Helios platform, for example, is designed at rack scale with an emphasis on openness and partner collaboration. Huawei’s CloudMatrix and Atlas SuperPoD lines reflect a domestic-stack approach driven by export controls, covering chips, systems and software.

However, Nvidia’s tight integration of compute, networking and system fabric, together with its push for well-defined reference designs such as DGX and NVL72, points to a more tightly controlled ecosystem than AMD’s relatively open, partner-based approach.