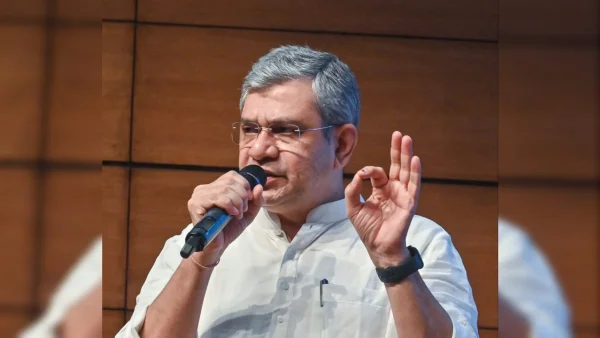

Ashwini Vaishnaw, the Union Minister for Electronics and Information Technology, wants platforms to take real responsibility for the content they host, stating that the safety of the internet and trust in institutions depend on how platforms act. He expressed concern about the misuse of new technologies and called for stronger protection of children and of the public at large.

Platforms should take responsibility for the content they host

At a tech meeting in New Delhi, Vaishnaw said platforms need to ‘understand’ and reinforce the public’s trust in institutions that have been built up over many years. He said platforms cannot treat hosting content as a passive activity when harm is likely and happens often.

He made clear that platforms will be held to account if basic safety rules are not followed. The minister said the internet has changed, and so have the duties of companies that run large digital services.

Trust in institutions and the changing information landscape

The minister framed his remarks around trust, saying that social institutions depend on credibility, verification, and accountability. When platforms allow false or manipulated content, that basic trust is eroded in families, in society as a whole, and in the legal system.

New technologies heighten that risk. AI-generated video and audio, together with automated amplification, can make fabricated events look real, damaging reputations and public debate. Vaishnaw said society expects platforms to respect and protect that trust.

Keeping children safe and protecting people online

Vaishnaw named the online safety of children as the foremost duty of platforms. He asked platforms to adopt rules that keep children from seeing harmful material and from being sexually exploited, while still respecting privacy and legal content.

Platforms should use age verification, robust content moderation, and parental controls, the minister said. He called for better reporting mechanisms, swift removal of harmful content, and cooperation with the government to protect children and vulnerable people.

Regulating AI-generated content and ‘deepfakes’

The main focus was the misuse of artificial intelligence to create ‘deepfakes’ and other AI-generated content. Vaishnaw said such content should not be made without the consent of the person whose face, voice or personality is used.

He called for a major change in how AI-generated content is governed. The minister asked platforms to require consent, to label content with its origin, and to offer transparent tools that let people see whether media has been altered by AI and verify that it is authentic.

Practical steps for platforms and regulators

Vaishnaw called for a mix of technical, regulatory, and cooperative measures. Platforms should make their terms of service clearer, strengthen human moderation, and publish transparent reports on their moderation decisions and on the effects of their algorithms.

Technical measures include labelling the provenance of content, requiring watermarks on AI-generated content, and investing in both automated and human detection of manipulated content. The minister also suggested awareness campaigns to improve digital literacy.

Governments and platforms should work with civil society groups, researchers, and child protection organisations. Clear legal rules, fair enforcement, and international cooperation will help tackle misuse while protecting free speech.

A call to action for industry and policymakers

Vaishnaw said platform responsibility was both a legal requirement and a moral obligation. He asked companies to respect society’s demand for safer digital spaces and to adopt rules that put human dignity and trust first.

The minister’s remarks signal a policy shift towards holding platforms accountable for the content they host, for online safety, and for handling AI-generated content transparently. For platforms, the message is simple: act now to protect people, or face tighter scrutiny.